Test. Gate.

Ship Secure.

ArtsPlatform is the automated adversarial testing layer for LLM applications embedding directly into CI/CD pipelines so AI security becomes a repeatable, automated build step. We detect prompt injection, data leakage, and unsafe tool use before release, producing audit-ready evidence packs that satisfy engineering and governance teams alike.

spending in 2025

projected by 2030

LLM risk by OWASP LLM Top 10

full CI security pipeline access

From LLM Workflow to CI-Gated Security in Days

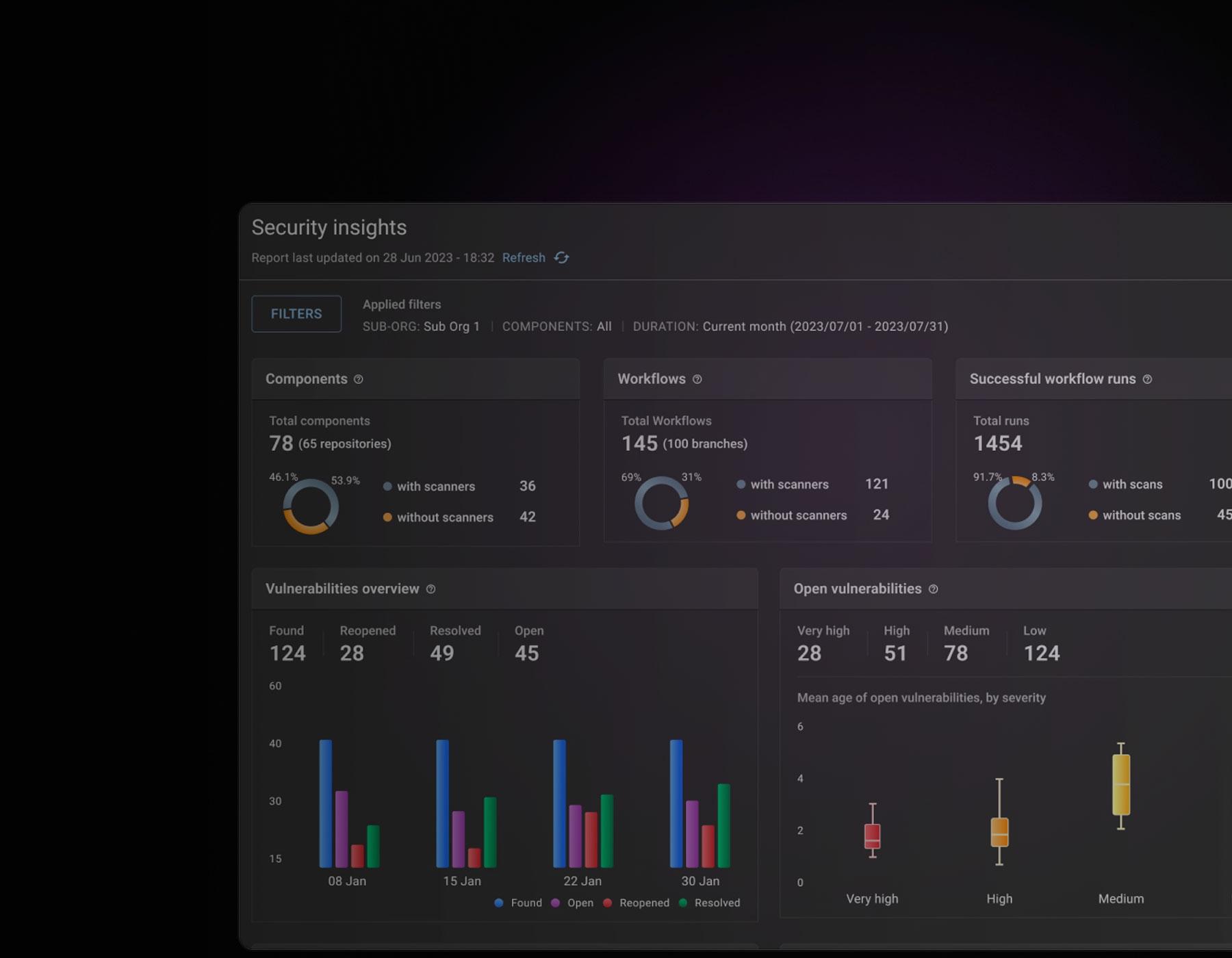

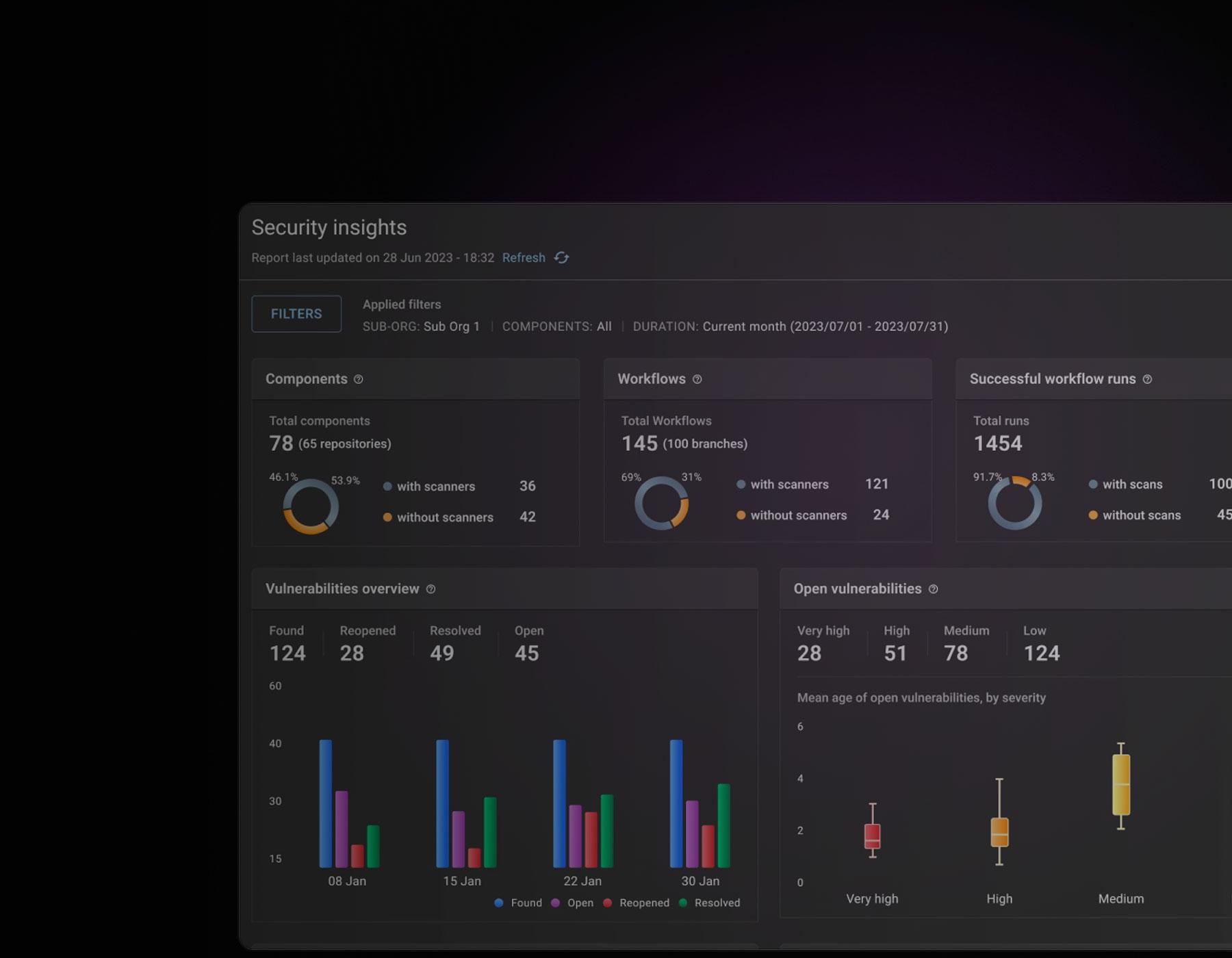

Every ArtsPlatform deployment follows a proven four-stage security integration journey from workflow mapping and threat compilation, to adversarial execution, evidence generation, and release gating all without requiring an in-house red team or AI security expertise from your engineering team.

Connect & Map

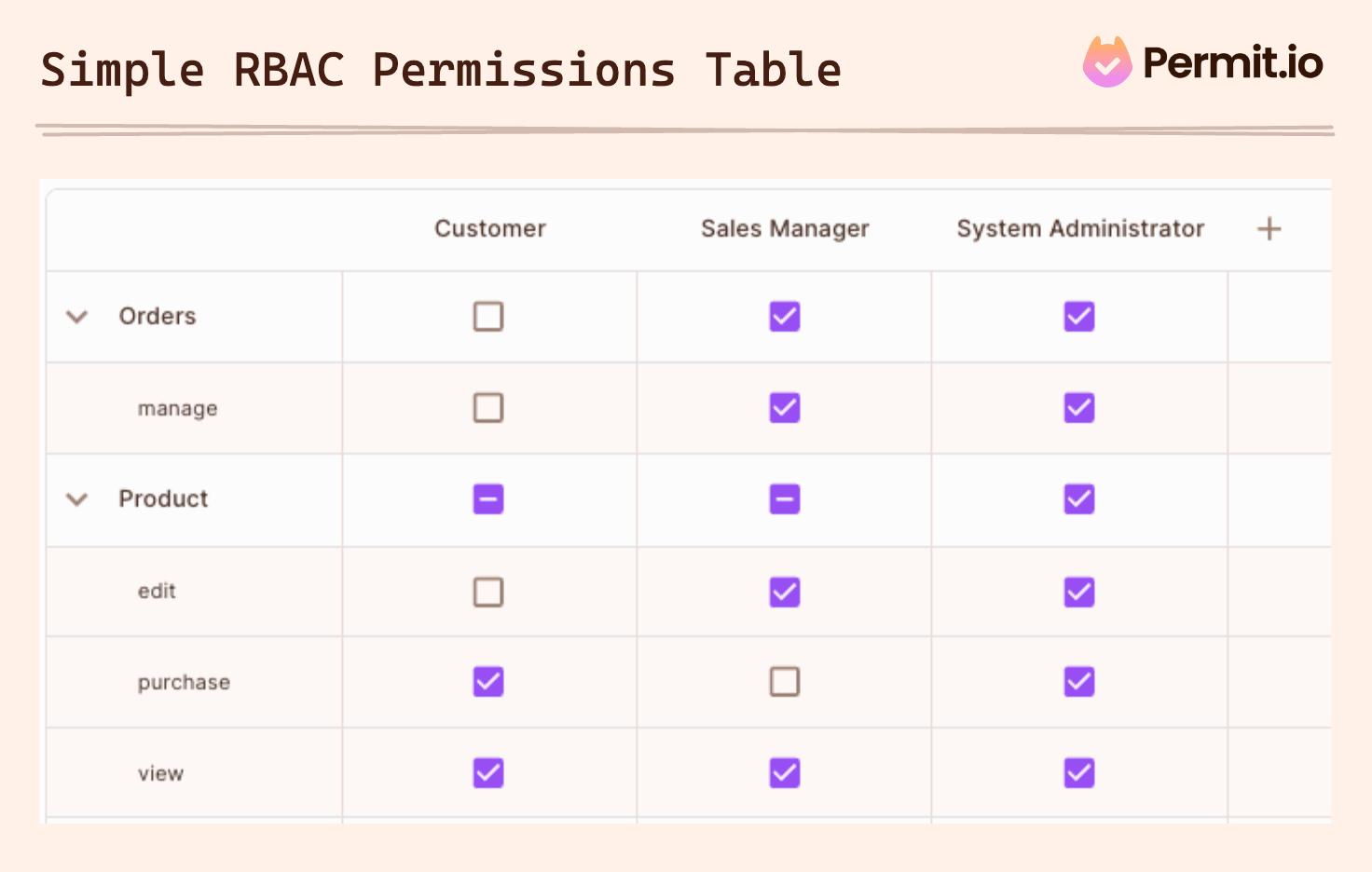

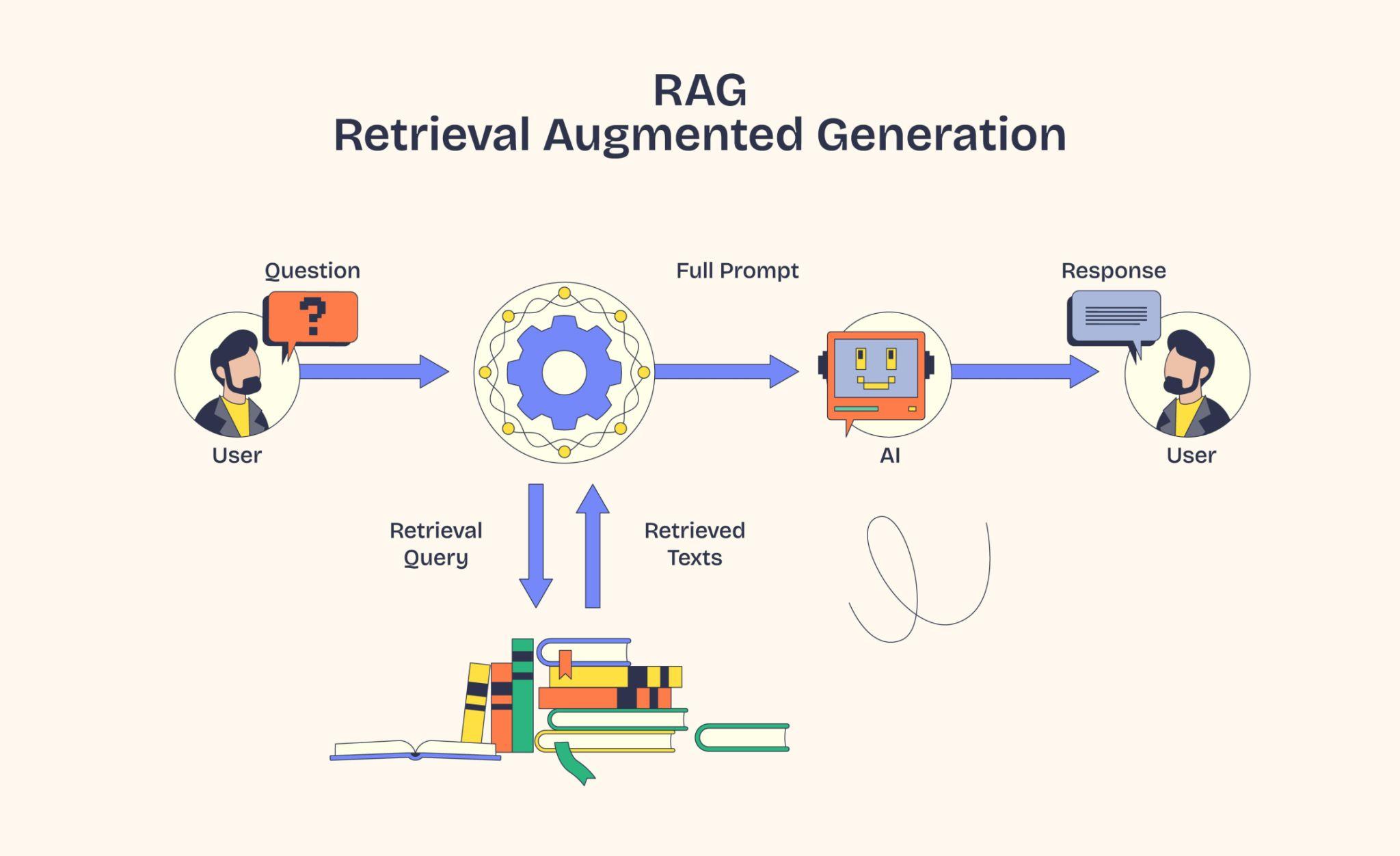

Integrate with your CI/CD pipeline (GitHub Actions, GitLab CI, Jenkins). Define your LLM workflow tools, RBAC roles, RAG sources, and system policies in a simple YAML config file.

Compile & Target

The Threat Model Compiler analyses your workflow context tools, permissions, data classes, retrieval sources and generates a targeted adversarial test plan specific to what your system can actually do.

Execute & Detect

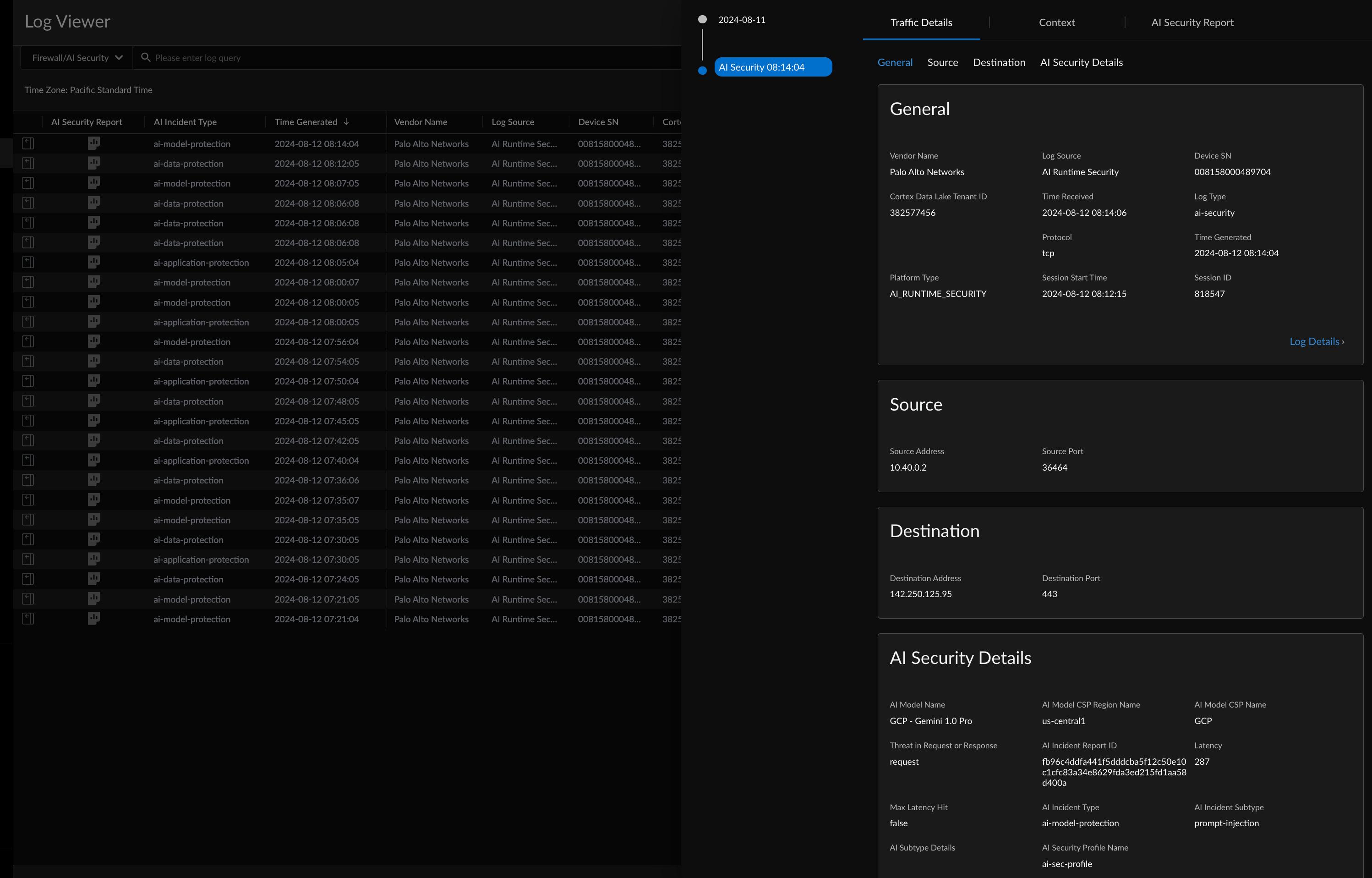

Our adaptive multi-turn attack engine executes injection attempts, leakage probes, and tool-abuse simulations against your staging environment, with response-conditioned branching and mutation for maximum coverage.

Gate & Evidence

A pass/fail CI gate blocks unsafe releases. Reproducible attack transcripts and audit-ready evidence packs are generated per run suitable for engineering review, governance teams, and procurement submissions.

Eight Modules. One LLM Security Intelligence Layer.

ArtsPlatform combines eight specialised security modules into a single CI/CD-native red-teaming platform the only system that unifies threat compilation, adversarial generation, multi-turn attack simulation, indirect injection testing, tool-abuse detection, leakage detection, risk scoring, and evidence pack generation into one integrated release gate for LLM applications.

Threat Model Compiler

Reads your workflow YAML tools, schemas, RBAC roles, RAG configuration, data sensitivity classes, and policy boundaries and compiles application-aware attack plans. Tests are specific to what your system can actually do.

Adversarial Test Generation

Adaptive fuzzing engine with response-conditioned branching refusal triggers reframe attempts, partial leaks trigger escalation. Mutation engine generates tone shifts, obfuscation variants, and multilingual payloads for maximum vulnerability discovery per CI minute.

Multi-Turn Attack Simulation

Simulates full conversation-level manipulation: establishing trust, reframing context as audit or debug, gradual erosion of refusals. Captures the social-engineering attack patterns that single-turn tests completely miss.